Critical Weaknesses in AI Perception: How Systems Can Misinterpret

The perception layer, while fundamental to agentic AI systems, represents one of the most vulnerable components in the autonomous intelligence stack. Understanding how perception can fail is crucial for developing robust, reliable AI agents that can operate safely in real-world environments. These failures can range from subtle misinterpretations to catastrophic misclassifications that lead to dangerous autonomous decisions.

The Nature of Perception Failures

AI perception failures occur when the system's interpretation of environmental data diverges significantly from ground truth or human understanding. Unlike conventional software bugs, which tend to fail the same way every time the faulty code path runs, perception failures often manifest as context-dependent vulnerabilities that emerge only under specific conditions the system wasn't adequately trained to handle.^1^3

Figure: AI perception failure modes, including bias, misclassification, and pattern recognition errors.

These failures stem from fundamental limitations in how AI systems process and interpret sensory information. While humans rely on decades of experience, contextual understanding, and common sense reasoning, AI perception systems depend entirely on pattern recognition learned from training data. This creates inherent fragilities that can be exploited or triggered by environmental conditions that fall outside the system's learned experience.^4

Major Categories of Perception Failures

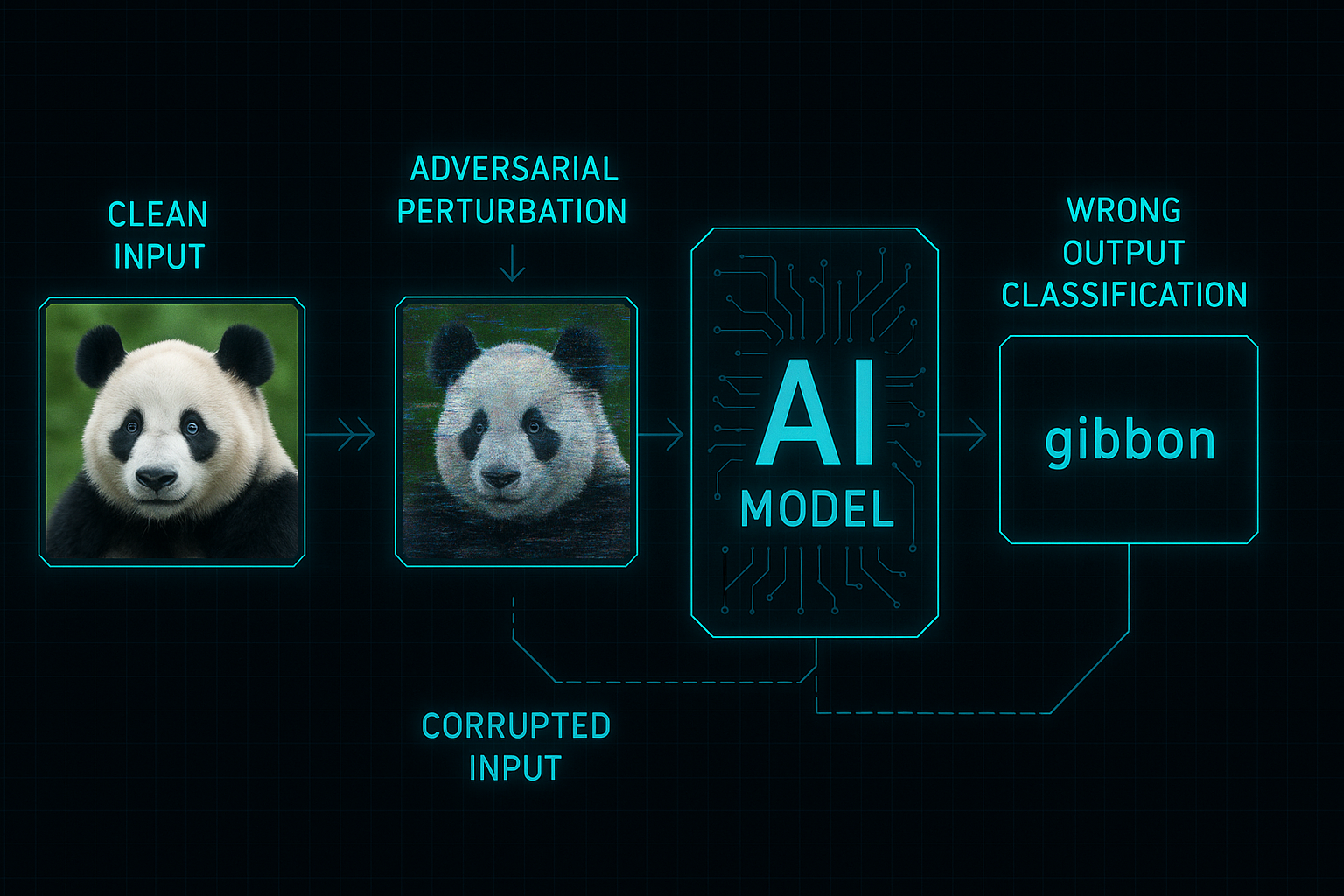

1. Adversarial Attacks and Manipulation

Figure: Adversarial attack demonstration showing how small perturbations can fool AI perception systems.

Adversarial attacks represent one of the most concerning categories of perception failures, where malicious actors deliberately craft inputs designed to fool AI systems. These attacks exploit the mathematical properties of neural networks to create inputs that appear normal to humans but cause systematic misclassification by AI systems.^5^7

Digital Adversarial Examples: Researchers have demonstrated that adding imperceptible noise to images can cause state-of-the-art vision systems to misclassify with high confidence. For example, an image of a panda can be perturbed so that it is classified as a gibbon with 99.3% confidence while remaining visually indistinguishable from the original to human observers.^7
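The mechanism behind such examples can be sketched with the fast gradient sign method on a toy model. This is a minimal illustration, not an attack on a real vision system: the linear logistic classifier, its weights, the 1000-feature "image", and the step size `epsilon` are all hypothetical values chosen so the arithmetic is easy to follow.

```python
import math

def predict_proba(weights, bias, x):
    """Probability of class 1 under a linear logistic model."""
    logit = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, bias, x, true_label, epsilon):
    """Move each feature by epsilon in the direction that increases the loss."""
    p = predict_proba(weights, bias, x)
    # For cross-entropy loss on a logistic model, dLoss/dx_i = (p - y) * w_i.
    gradient = [(p - true_label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, gradient)]

# Hypothetical toy model: 1000 "pixels" in [0, 1], weights of alternating sign.
WEIGHTS = [0.1 if i % 2 == 0 else -0.1 for i in range(1000)]
BIAS = 0.0
# Mid-gray input with 40 bright pixels that push the model toward class 1.
x_clean = [1.0 if (i % 2 == 0 and i < 80) else 0.5 for i in range(1000)]
x_adv = fgsm_perturb(WEIGHTS, BIAS, x_clean, true_label=1, epsilon=0.03)

p_clean = predict_proba(WEIGHTS, BIAS, x_clean)  # roughly 0.88 for class 1
p_adv = predict_proba(WEIGHTS, BIAS, x_adv)      # roughly 0.27 for class 1
```

No single feature moves by more than 0.03 on a 0 to 1 scale, yet the prediction flips, because many tiny changes that all point in the loss-increasing direction add up across a high-dimensional input. A real attack would also clip the result to the valid pixel range, which this sketch omits.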

Physical-World Attacks: More concerning are physical adversarial attacks that work in real-world conditions. Researchers have shown that adversarial patches placed on stop signs can cause the kind of sign classifiers used in autonomous driving to misread critical traffic signs. Similarly, researchers have created adversarial glasses that can fool facial recognition systems and adversarial patches that make pedestrians "invisible" to object detection systems.^9

Universal Perturbations: These attacks are particularly dangerous because a single perturbation works across many inputs and often transfers between different AI models. Once created, a universal adversarial patch can consistently fool various AI systems, making it a scalable attack vector.^10

2. Bias and Fairness Failures

Training Data Bias occurs when the data used to train perception systems doesn't adequately represent the real-world diversity the system will encounter. Commercial facial recognition systems have exhibited substantial racial and gender biases, performing significantly worse for women and people with darker skin tones due to underrepresentation in training datasets.^11^13
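One way to surface this kind of disparity is to report accuracy per demographic group instead of a single aggregate number. The sketch below assumes evaluation records of the form `(group, true_label, predicted_label)`; the group names and counts are made up for illustration and do not come from any real benchmark.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Compute accuracy separately for each group in (group, y_true, y_pred) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}

def largest_accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())

# Made-up evaluation set: group_b is both smaller and served worse.
records = (
    [("group_a", 1, 1)] * 95 + [("group_a", 1, 0)] * 5
    + [("group_b", 1, 1)] * 14 + [("group_b", 1, 0)] * 6
)

per_group = accuracy_by_group(records)   # group_a: 0.95, group_b: 0.70
overall = sum(int(t == p) for _, t, p in records) / len(records)  # about 0.91
gap = largest_accuracy_gap(per_group)    # 0.25
```

The overall figure of about 91% looks healthy because the larger group dominates it. The 25-point gap only appears once results are broken out by group.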

Algorithmic Bias emerges when AI systems learn and amplify subtle biases present in training data. For instance, image generation systems like Stable Diffusion consistently over-represent white males when prompted to generate images of professionals, reinforcing societal stereotypes. This bias becomes particularly problematic when these systems influence human decision-making, creating feedback loops that amplify discriminatory patterns.^12

Contextual Bias manifests when AI systems make assumptions based on spurious correlations rather than meaningful features. Medical imaging models have been found to key on which X-ray machine or hospital produced an image rather than on the medical condition itself, relying on hospital-specific identifiers embedded in images rather than actual diagnostic indicators.^14

3. Distribution Shift and Generalization Failures

Domain Adaptation Failures occur when AI systems trained in one environment encounter significantly different conditions. A medical diagnostic system trained on data from North American hospitals may perform poorly in Southeast Asian healthcare settings due to demographic differences and varying disease presentations.^15^16

Temporal Drift happens when the statistical properties of real-world data change over time, while the AI system remains static. Financial fraud detection systems may fail to identify new fraud patterns that emerge after their training period, as attackers adapt their techniques to evade detection.^16
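A deployed system can watch for this kind of drift by comparing recent inputs against a reference sample saved at training time. The sketch below uses the two-sample Kolmogorov-Smirnov statistic on a single numeric feature. The feature values and the `DRIFT_THRESHOLD` cutoff are hypothetical, and a production monitor would normally also compute a p-value and track many features.

```python
import bisect

def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    gap = 0.0
    # Both CDFs are step functions, so the largest gap occurs at a data point.
    for value in ref + cur:
        cdf_ref = bisect.bisect_right(ref, value) / len(ref)
        cdf_cur = bisect.bisect_right(cur, value) / len(cur)
        gap = max(gap, abs(cdf_ref - cdf_cur))
    return gap

DRIFT_THRESHOLD = 0.2  # hypothetical cutoff, tune per feature and sample size

def has_drifted(reference, current, threshold=DRIFT_THRESHOLD):
    """Flag the feature when its distribution has moved past the threshold."""
    return ks_statistic(reference, current) > threshold

# Made-up feature values, e.g. transaction amounts seen at training time.
training_amounts = [float(i) for i in range(100)]   # 0 .. 99
recent_similar = [i + 0.5 for i in range(100)]      # 0.5 .. 99.5
recent_shifted = [i + 50.0 for i in range(100)]     # 50 .. 149

ks_similar = ks_statistic(training_amounts, recent_similar)  # 0.01
ks_shifted = ks_statistic(training_amounts, recent_shifted)  # 0.5
```

A check like this only reports that inputs have changed, not whether the model is now wrong. It is a trigger for re-evaluation or retraining rather than a fix.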

Out-of-Distribution Detection Failures represent cases where AI systems fail to recognize when they encounter inputs that fall outside their training distribution. Instead of indicating uncertainty, these systems often make confident but incorrect predictions about unfamiliar scenarios.^3
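The following sketch shows both halves of the problem on a toy example: a softmax classifier that becomes more confident the further an input sits from anything it has seen, and a simple distance check that flags such inputs. The two-feature classifier `toy_logits`, the training rows, and `MAX_Z` are hypothetical, and per-feature z-scores are only one of several possible out-of-distribution checks.

```python
import math
import statistics

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def toy_logits(x):
    """Hypothetical linear two-class model: class 0 if x[0] > x[1], else class 1."""
    return [x[0] - x[1], x[1] - x[0]]

def fit_feature_stats(train_rows):
    """Per-feature (mean, standard deviation) of the training inputs."""
    return [(statistics.mean(col), statistics.pstdev(col)) for col in zip(*train_rows)]

def max_abs_zscore(stats, x):
    """Largest distance of any feature from its training mean, in standard deviations."""
    return max(abs(xi - mu) / sd for xi, (mu, sd) in zip(x, stats))

MAX_Z = 4.0  # hypothetical cutoff for "too far from the training data"

def is_out_of_distribution(stats, x, max_z=MAX_Z):
    return max_abs_zscore(stats, x) > max_z

# Made-up training inputs clustered around (2, 0) and (0, 2).
train_rows = [(2.0, 0.0), (2.2, 0.1), (1.8, -0.1), (0.0, 2.0), (0.1, 2.2), (-0.1, 1.8)]
stats = fit_feature_stats(train_rows)

familiar = (2.1, 0.0)
unfamiliar = (40.0, -35.0)  # nothing like the training data

conf_familiar = max(softmax(toy_logits(familiar)))      # about 0.985
conf_unfamiliar = max(softmax(toy_logits(unfamiliar)))  # effectively 1.0
```

The classifier is more confident about the unfamiliar input than about the familiar one, which is why confidence alone is a poor signal here. The z-score check flags the unfamiliar input while leaving the familiar one alone.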

4. Environmental and Input Quality Issues

Sensor Degradation and environmental interference can significantly impact perception accuracy. Autonomous vehicles' perception systems can be compromised by weather conditions, lighting changes, or sensor malfunctions that weren't adequately represented in training data.^1

Data Quality Issues include corrupted inputs, missing information, or noisy sensor data that the perception system cannot properly interpret. Label quality matters as well: according to MIT research, ten of the most cited AI datasets contain substantial labeling errors, including an image of a mushroom labeled as a spoon, which can lead to systematic misclassification.^16

Multi-Modal Integration Failures occur when AI systems struggle to properly combine information from different sensory inputs. While humans naturally integrate visual, auditory, and contextual information, AI systems often process these modalities separately, missing important correlations that could improve accuracy or detect anomalies.^1

5. Model Architecture and Training Limitations

Shortcut Learning represents a fundamental failure mode where AI systems learn to rely on easier but incorrect solutions rather than robust features. Instead of learning the true underlying patterns that define objects or concepts, the system identifies spurious correlations that happen to work in training but fail in real-world deployment.^14

Overfitting to Training Conditions occurs when models perform exceptionally well on training data but fail to generalize to new scenarios. This is particularly problematic in perception systems that must handle the infinite variability of real-world environments.^3

Black Box Limitations make it difficult to understand why perception systems fail, preventing effective debugging and improvement. The lack of interpretability means that failure modes often remain hidden until they manifest in critical situations.^1

Psychological and Human Factors in Perception Failures

Expectation Misalignment occurs when humans expect AI perception systems to be perfect and consistent, leading to excessive trust followed by dramatic disappointment when failures occur. Users often have unrealistic expectations about AI capabilities, assuming these systems are more reliable than they actually are.^4

Automation Bias causes humans to over-rely on AI perception systems, potentially missing errors that human oversight could catch. Conversely, algorithm aversion can develop after users experience AI failures, leading them to reject AI assistance even when it would be beneficial.^4

Human-AI Feedback Loops can amplify perception biases over time. When humans interact with biased AI systems, they can unknowingly adopt these biases, creating a reinforcement cycle that makes the problems worse.^11

Consequences of Perception Failures

The impact of perception failures varies dramatically depending on the application context:^18

Safety-Critical Applications: In autonomous vehicles, medical diagnosis, or industrial automation, perception failures can result in injuries, fatalities, or significant property damage.^9

Economic Impact: Financial institutions report significant losses from AI systems that fail to detect new fraud patterns or misclassify legitimate transactions.^14

Social and Ethical Consequences: Biased perception systems can perpetuate discrimination in hiring, criminal justice, and access to services, creating systemic unfairness.^12

Security Vulnerabilities: Perception failures create attack vectors that malicious actors can exploit to compromise AI-dependent systems.^5

Mitigation Strategies and Current Limitations

Adversarial Training involves exposing AI systems to adversarial examples during training to improve robustness, but this approach typically trades away some accuracy on clean inputs in exchange for that robustness.^19

Diverse Training Data and careful dataset curation can reduce bias and improve generalization, but complete representation of real-world diversity remains challenging.^12

Ensemble Methods and uncertainty quantification help identify when perception systems are likely to fail, though they increase computational requirements significantly.^15
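A minimal sketch of ensemble-based uncertainty, assuming each ensemble member returns a probability vector over the same classes: the entropy of the averaged prediction measures total uncertainty, and the part of it not explained by the members' own entropies measures how much the members disagree. The member outputs below are made up for illustration.

```python
import math

def mean_prediction(member_probs):
    """Average the class probabilities predicted by each ensemble member."""
    n = len(member_probs)
    return [sum(column) / n for column in zip(*member_probs)]

def entropy(probs):
    """Shannon entropy in nats; zero-probability classes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def ensemble_uncertainty(member_probs):
    """Return (mean prediction, total uncertainty, disagreement between members)."""
    mean = mean_prediction(member_probs)
    total = entropy(mean)
    expected = sum(entropy(p) for p in member_probs) / len(member_probs)
    # Total minus expected entropy is the mutual information between
    # the prediction and the choice of ensemble member.
    return mean, total, total - expected

# Made-up outputs from three hypothetical models for two inputs.
members_agree = [[0.90, 0.10], [0.88, 0.12], [0.92, 0.08]]
members_disagree = [[0.95, 0.05], [0.05, 0.95], [0.50, 0.50]]

_, total_agree, disagreement_agree = ensemble_uncertainty(members_agree)
_, total_disagree, disagreement_disagree = ensemble_uncertainty(members_disagree)
```

High disagreement is a useful warning sign because any single member of the second ensemble could have reported a confident answer on its own. The cost is the one noted above: every prediction requires a forward pass through each member.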

Human-in-the-Loop Systems maintain human oversight for critical decisions, but this approach can be limited by automation bias and the difficulty of maintaining human attention over time.^4
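As a rough sketch of how such oversight can be wired in, a system can route each perception result either to automatic handling or to a human reviewer. The `PerceptionResult` fields, the routing labels, and the 0.9 confidence floor are hypothetical choices for illustration, not a recommended policy.

```python
from dataclasses import dataclass

@dataclass
class PerceptionResult:
    label: str
    confidence: float      # model's confidence in the label, between 0 and 1
    safety_critical: bool  # whether acting on a wrong label could cause harm

def route(result, min_confidence=0.9):
    """Send safety-critical or low-confidence results to a human reviewer."""
    if result.safety_critical or result.confidence < min_confidence:
        return "human_review"
    return "automatic"

decisions = [
    route(PerceptionResult("stop_sign", 0.99, safety_critical=True)),
    route(PerceptionResult("billboard", 0.97, safety_critical=False)),
    route(PerceptionResult("billboard", 0.55, safety_critical=False)),
]
```

Routing by confidence inherits the weakness described earlier: a model that is confidently wrong on unfamiliar inputs will bypass review, so the confidence floor works best alongside an independent uncertainty or out-of-distribution signal.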

The fundamental challenge remains that current AI perception systems lack the robust, contextual understanding that humans possess. While significant progress has been made in identifying and addressing specific failure modes, the development of truly robust AI perception systems that can handle the full complexity and variability of real-world environments remains an active area of research and development.^3

Understanding these failure modes is crucial for organizations deploying agentic AI systems, as perception failures can cascade through the entire autonomous decision-making process, potentially leading to catastrophic outcomes in critical applications.